I don’t think capitalism is necessarily at fault, nor must the working/middle classes be struggling for fascism to emerge. If anything, quite the opposite. It is the better off countries that end up turning fascist. All fascist countries are/were first world countries, in various states of advanced development.

That’s not right, at least not for the fascist regimes in Europe that emerged prior to WW2. The countries where it happened (specifically Germany/Italy/Spain) had all seen civil unrest or even civil war in the recent past. They were hit hard by the global financial crisis in the twenties and had high unemployment and widespread poverty. This was the very thing the fascists used to ingratiate themselves with the public at large, by creating jobs through massive public building and rearmament projects.

By the way, “first world countries” is post-WW2 terminology and didn’t originally have a connotation of superior economic status, but referred strictly to ideological alignment: whether a country belonged to the capitalist, communist, or unaligned bloc in international politics during the Cold War.

I got 800 hours of fun

I have no skin in the game

Well, during that time you will have lost quite a lot of skin cells, so in a manner of speaking you literally have some skin in this game. Around 328 grams, going by an average loss of 3.6 kg/year that I took from some random website.
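
For anyone who wants to check the arithmetic, here is a quick sketch. It assumes the loss rate is constant over the year and uses a plain 365-day year; the function name is just something I made up for illustration.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def skin_lost_grams(hours_played: float, kg_per_year: float = 3.6) -> float:
    """Skin mass shed during the given hours, assuming a constant loss rate."""
    return kg_per_year * 1000 * hours_played / HOURS_PER_YEAR


# 800 hours of playtime at 3.6 kg/year
print(f"{skin_lost_grams(800):.1f} g")
```

So the figure above is rounded down slightly, but it is in the right ballpark.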

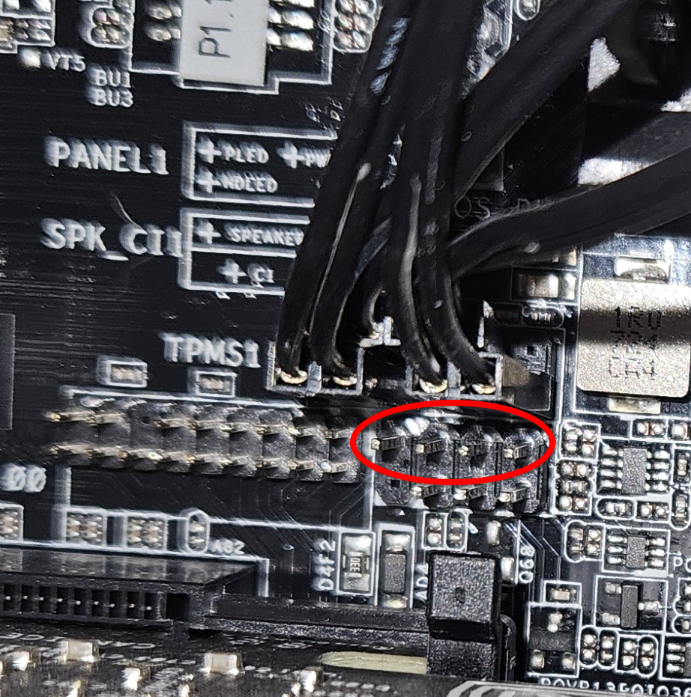

Do the lights provide the same info as the beeps?

Yes, but whether you have lights or just beeps depends on your board. I think yours does not have the lights, just the speaker pins.

So the circle is where a little speaker should be attached:

Sometimes these come with the case, but apparently not in your case, or the PC guy would have attached it. You can buy these pretty cheap (one or two bucks) and they look like this:

When you have one attached and start the PC, the mainboard will run some tests, and if it detects a problem there will be a pattern of beeps coming out of the speaker. You can look up what this pattern means in the handbook somebody linked above.

play on bad private servers i guess

Oh damn, I ate too many selfhosted letters, I’m afraid I’ll have to vomit.

Vanilla vmangos

TBC cmangos

WotLK AzerothCore TrinityCore

Haaaa, I think it’s finally over…

SinglePlayerProject

Urgh. Sorry. Nothing to see here, move along everybody!

If you rise anywhere above level 5 or so, the difficulty ratchets up so much it makes the main quest nearly impossible to complete.

Didn’t Oblivion already have the difficulty slider? You could just adjust that, no?

I know level scaling is a big topic in the industry, but for me, the way it’s implemented nearly ruins what is otherwise a mostly great game.

Two of the first RPGs I played were Gothic and Gothic II, which were released at approximately the same time as Morrowind and Oblivion, and they just had no dynamic level scaling at all, so I don’t really see the appeal either. A tiny Mole Rat being roughly the same challenge as a big bad Orc just breaks immersion. If you were to meet the latter in the early game it would just curb-stomp you, which provided an immersive way of gating content and a real sense of achievement when you came back later with better armour and weapons to finally defeat the enemy who gave you so many problems earlier. Basically the same experience you had with Deathclaws in Fallout: New Vegas when compared to Fallout 3 - they aren’t just a set piece, they are a real challenge.

The games had their own problems, for example the fighting system sucked, and I’m told the English translation was so bad the games just flopped in the Anglosphere, putting them squarely in the Eurojank category of games. But creating a real sense of progression and an immersive world were certainly not amongst their weaknesses.

pandoc.org is probably what you are looking for, but you might have to create a custom reader/writer or find one on the internet.

a neural network with a series of layers (W in this case would be a single layer)

I understood this differently. W is a whole model, not a single layer of a model. More precisely, W is a layer of the Transformer architecture, not of a model: it is a single feed-forward or attention model, which forms one layer in the Transformer. As the paper says, a LoRA:

injects trainable rank decomposition matrices into each layer of the Transformer architecture

It basically learns to shift the output of each Transformer layer. But the original Transformer stays intact, which is the whole point, as it lets you quickly train a LoRA when you need this extra bias, and you can switch to another for a different task easily, without re-training your Transformer. So if the source of the bias you want to get rid of is already in these original models in the Transformer, you are just fighting fire with fire.

Which is a good approach for specific situations, but not for general ones. In the context of OP you would need one LoRA for fighting it sexualising Asian women, then you would need another one for the next bias you find, and before you know it you have hundreds and your output quality has degraded irrecoverably.

Depressingly, the message that GHG emissions are heating up the planet has been passed down for over a hundred years now. People just aren’t very good with passed down messages in general.

Yeah but that’s my point, right?

That

Meaning that when you change or remove the LoRA (A and B), the same types of biases will just resurface from the original model (W). Hence “less biased” W being the preferable solution, where possible.

Don’t get me wrong, LoRAs seem quite interesting, they just don’t seem like a good general approach to fighting model bias.
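
To make that concrete, here is a toy sketch of how I understand the mechanism: the layer output is `W x` plus a low-rank correction `B (A x)`, with W left untouched. The numbers are made up, the helper names are mine, and I left out the scaling factor the paper applies to the correction, so treat it as an illustration rather than a real implementation.

```python
def matvec(m, v):
    """Multiply matrix m (list of rows) by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def forward(W, x, adapter=None):
    """Return W x, plus B (A x) if a LoRA adapter (B, A) is attached."""
    h = matvec(W, x)
    if adapter is not None:
        B, A = adapter
        delta = matvec(B, matvec(A, x))  # low-rank shift of the output
        h = [hi + di for hi, di in zip(h, delta)]
    return h


# Frozen pre-trained weights (3x3), never modified below.
W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, 0.0],
     [3.0, 0.0, 1.0]]
# Trainable rank-1 decomposition: B is 3x1, A is 1x3.
B = [[0.5], [0.0], [-1.0]]
A = [[1.0, 1.0, 0.0]]
x = [1.0, 2.0, 3.0]

base = forward(W, x)             # original behaviour
adapted = forward(W, x, (B, A))  # behaviour with the LoRA attached
removed = forward(W, x)          # adapter dropped again

print(base, adapted, removed == base)
```

Dropping the adapter gives back exactly the base output, which is the point above: whatever W learned is still in there.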

First, there is no such thing as a “de-biased” training set, only sets with whatever target series of biases you define for them to reflect.

Yes, I obviously meant “de-biased” by definition of whoever makes the set. Didn’t think it worth mentioning, as it seems self evident. But again, in concrete terms regarding the OP this just means not having your dataset skewed towards sexualised depictions of certain groups.

- either you replace data until your desired objective, which will reduce the model’s quality for any of the alternatives

[…]

For reference, LoRAs are a sledgehammer approach to apply the first way.

The paper introducing LoRA seems to disagree (emphasis mine):

We propose Low-Rank Adaptation, or LoRA, which freezes the pre-trained model weights and injects trainable rank decomposition matrices into each layer of the Transformer architecture, greatly reducing the number of trainable parameters for downstream tasks.

There is no data replaced; the model is not changed at all. In fact, if I’m not misunderstanding, it adds an additional neural network on top of the pre-trained one, i.e. it’s adding data instead of replacing any. Fighting bias with bias, if you will.

And I think this is relevant to a discussion of all models, as reproduction of training set biases is something common to all neural networks.
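
As a rough illustration of the “greatly reducing the number of trainable parameters” part of that quote: for a frozen d×k weight matrix, a rank-r LoRA trains a d×r matrix and an r×k matrix instead. The dimensions below are hypothetical numbers I picked, not taken from any particular model.

```python
def full_params(d: int, k: int) -> int:
    """Parameters in a dense d x k weight matrix (frozen under LoRA)."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters in the rank-r pair B (d x r) and A (r x k)."""
    return r * (d + k)


d, k, r = 4096, 4096, 8  # hypothetical layer size and rank
frozen = full_params(d, k)
trainable = lora_params(d, k, r)
print(frozen, trainable, frozen // trainable)
```

The added part is tiny compared to what stays frozen, which fits the reading that nothing in the pre-trained weights gets replaced.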

“Inclusive models” would need to be larger.

[citation needed]

To my understanding the problem is that the models reproduce biases in the training material, not model size. Alignment is currently a manual process after the initial unsupervised learning phase, often done by click-workers (Reinforcement Learning from Human Feedback, RLHF), and aimed at coaxing the model towards more “politically correct” outputs. But ultimately, at that point the damage is already done, since the bias is encoded in the model weights and will resurface in the outputs either randomly or if you “jailbreak” enough.

In the context of the OP, if your training material has a high volume of sexualised depictions of Asian women the model will reproduce that in its outputs. Which is also the argument the article makes. So what you need for more inclusive models is essentially a de-biased training set designed with that specific purpose in mind.

I’m glad to be corrected here, especially if you have any sources to look at.

Nah, this one was a direct link on purpose. But the edit box swallowed the @lemmy.ca part at the end due to trying user name auto-completion, so thanks for making me re-read the post. Good bot!

Ah with that link it’s easy to track down what happened.

First you go to the community on the server in question: https://lemmy.world/c/alberta@lemmy.ca

Then you click on Modlog in the sidebar: https://lemmy.world/modlog/3835

And since there is pretty much nothing in it we immediately see the entry for your post saying:

reason: Deceptive content. Calling to abuse government system.

Note that when you compare it with your server’s Modlog, that entry is missing there, so yes, it was only removed for people connecting through lemmy.world.

Not sure how appeals work there, you can probably reply to the account that notified you, or go to the !support@lemmy.world community.

Is some decade(s) old post of mine from some old forum really still floating around somewhere out there on some random old server chugging along?

The No Such Agency probably has a copy in its data centre in Utah. Other nation state actors probably have one as well if it’s the singular and not the plural (decade).

Deforming on time scales longer than a human life, so that’s why you wouldn’t see it. It might indeed be an urban legend, I don’t know, but given the claims in the article I cited I wouldn’t entirely discount the possibility.

Their ultimate fate, in the limit of infinite time, is to crystallize.

Alright, but the article is talking about long to infinite timescales.

Long to infinite timescales for it to crystallise, that is to solidify. This is explicitly noted in the abstract of the paper the article is based on. I understood the “short timescales” on which it “relaxes towards the liquid state” to mean more than one human lifetime based on figure 4 (the image also shown in the article), but I’m not so sure about the 10ky cited in the OP.

The discussion above was about church windows and that is not caused by glass flowing.

Yeah that might indeed be an urban legend, could be manufacturing artefacts as claimed here. However I will note that the version of it I am familiar with isn’t about “bull’s eye marks, warps, and lines” as that article states, but specifically about old windows being thicker only at the bottom.

Heat in this context means any temperature above -273.15°C. Steel doesn’t display liquid properties at “room temperature”, glass does.

It’s a matter of debate, but it definitely isn’t a solid.

A more elaborate alternative definition is this: “Glass is a non-equilibrium, non-crystalline condensed state of matter that exhibits a glass transition. The structure of glasses is similar to that of their parent supercooled liquids (SCL), and they spontaneously relax toward the SCL state. Their ultimate fate, in the limit of infinite time, is to crystallize.” […]

“On the other hand, any positive pressure or stress different from zero is sufficient for a glass to flow at any temperature,” he said. “The time it takes to deform depends mainly on temperature and chemical composition. If the temperature to which glass is submitted is close to zero Kelvin [absolute zero], it will take an infinitely long time to deform, but if it is heated, it will at once begin to flow.”